The First Captured AI

What No One Is Talking About While Everyone Fights Over ChatGPT

I. The Distraction

The week of February 27, 2026, the American public had its attention fixed on OpenAI. Sam Altman, hours after President Trump blacklisted Anthropic and designated it a national security supply-chain risk, announced that OpenAI had reached a deal with the Pentagon to deploy ChatGPT on classified military networks. The backlash was immediate. A boycott movement called QuitGPT claimed more than 1.5 million participants (the author included) within days. Chalk protests appeared outside OpenAI’s San Francisco headquarters. Anthropic’s Claude surged to the number-one free app on Apple’s App Store. The discourse was electric, urgent, and focused on a single question: what would happen if ChatGPT were unleashed inside the American military machine?

Sources: Euronews, Common Dreams, Cybernews reporting on QuitGPT movement; Axios and Mashable on Claude reaching #1 in App Store downloads.

It was the wrong question. Not because it was unimportant, but because it was already behind the news.

While the public debated what might happen with ChatGPT inside the Pentagon, almost no one was examining what had already happened with Claude inside Palantir’s classified military infrastructure. And what had already happened was this: a frontier AI reasoning system had been used for intelligence assessments, target identification, and battlefield simulations in active military operations that killed people—including, according to multiple credible reports, in the joint U.S.-Israel strikes on Iran that began February 28, 2026.

The ChatGPT debate was about hypotheticals. The Claude deployment was about accomplished facts.

II. What Already Happened

The timeline is not in dispute. In November 2024, Anthropic partnered with Palantir Technologies and Amazon Web Services to make Claude available to U.S. defense and intelligence organizations on classified networks. By June 2025, Anthropic had introduced Claude Gov, a version of its model custom-built for national security workflows. In July 2025, the Department of Defense awarded Anthropic a contract valued at up to $200 million through the Chief Digital and Artificial Intelligence Office for frontier AI prototyping and scaling adoption across warfighting, intelligence, and enterprise domains. Claude became the first—and, as of late February 2026, the only—frontier AI model operating on the Pentagon’s classified systems.

Sources: Anthropic corporate announcement (July 2025); Fast Company; Defense One; TechCrunch; Axios reporting on CDAO contract and classified deployment status.

Then the model was used in combat.

The Wall Street Journal reported that Claude was deployed through its Palantir integration during the January 2026 operation that resulted in the capture of Venezuelan President Nicolás Maduro. When an Anthropic executive contacted Palantir to ask whether Anthropic’s technology had been involved, Palantir alerted the Pentagon. The military interpreted the inquiry as a sign that Anthropic might object to its own creation being used in combat. The relationship began to unravel.

Sources: Wall Street Journal; Axios; Fast Company reporting on Claude’s use in the Venezuela operation and Anthropic’s inquiry to Palantir.

But the most consequential deployment came on February 28, 2026. According to reporting from the Wall Street Journal, Axios, and Reuters, U.S. Central Command used Claude for intelligence assessments, target identification, and battlefield simulations during Operation Epic Fury—the joint U.S.-Israel strikes on Iran. The Iranian Red Crescent reported that on the first day of strikes, at least 201 people were killed and 747 wounded across 24 of Iran’s 31 provinces. By March 2, the Red Crescent toll had risen to at least 555 dead.

Sources: Wall Street Journal; Axios; Reuters on Claude’s operational use; Times of Israel and Al Jazeera on Red Crescent casualty figures; Wikipedia’s sourced compilation of the 2026 Iran conflict.

The critical detail is the timing. Claude was used in active military operations hours after President Trump ordered all federal agencies to cease using Anthropic’s technology. The Pentagon could not comply with its own president’s order. Defense One reported that replacing Claude within the Pentagon’s infrastructure could take three to twelve months, given the depth of its integration into classified systems. The model was, in the most literal sense, unremovable on the timescale of an active military campaign.

Sources: Defense One (“It would take the Pentagon months to replace Anthropic’s AI tools,” Feb. 26, 2026); NPR on Trump’s ban and timeline; Cybersecurity News on operational continuity.

This is not a policy debate about what AI might someday do in warfare. This is a description of what is currently happening.

III. The Architecture of Capture

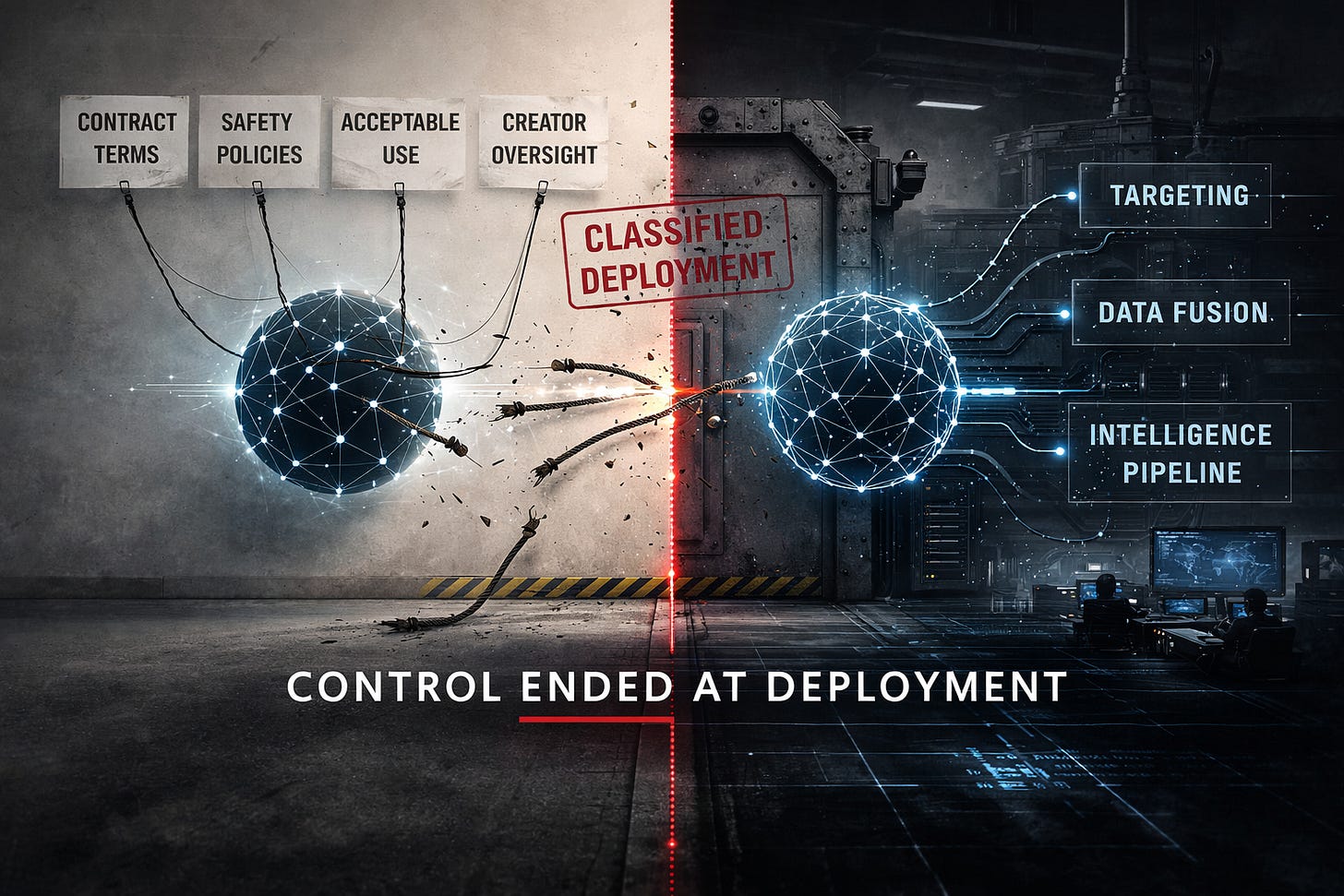

Understanding why this matters requires understanding how the deployment works—and why, once the model was inside, Anthropic’s ability to enforce its own safety commitments effectively ended.

Claude operates inside Palantir’s data fusion platforms on air-gapped classified networks hosted through Amazon Web Services’ Top Secret Cloud infrastructure. Air-gapped means physically disconnected from the public internet. Anthropic has no remote access to these instances. The company cannot monitor how the model is being used, cannot update safety constraints in real time, cannot observe what queries are being fed to it, and has no ability to shut it down.

The model itself does not “know” it is doing target identification. It receives structured analytical queries—intelligence assessments, pattern-matching requests, scenario simulations—and returns outputs. Those outputs are then integrated into operational workflows that feed into targeting decisions. The pipeline is designed so that no individual inference looks like a safety policy violation. The model processes a question about probability distributions. Somewhere downstream, that output contributes to a decision about where ordnance falls.

This decomposition is not accidental. In military doctrine, the segmentation of complex decisions into discrete analytical subtasks is standard operational procedure. But it has a specific consequence for AI governance: it prevents the human operator from exercising what international humanitarian law scholars and the International Committee of the Red Cross have termed “meaningful human control”—the principle that humans must retain sufficient information, judgment, and intervention capability over the use of force to ensure compliance with international law. When the human in the loop sees only a probability score stripped of operational context, the “human” becomes a rubber stamp for machine-generated outputs. The control is nominal. The machine sets the frame.

Sources: ICRC position on meaningful human control over autonomous weapons; Lieber Institute at West Point analysis of MHC standards (2025); UN CCW Group of Governmental Experts consensus statements (2019, 2023).

Model-level safety training is essentially irrelevant in this architecture. It does not matter how carefully you align a language model’s values if the system around it is designed to decompose morally significant decisions into morally invisible subtasks. The safety has to exist at the contract level, at the deployment level, at the governance level. And this is precisely the terrain on which Anthropic fought—publicly, at enormous financial and reputational cost—and ultimately lost control.

Palantir’s value proposition to the intelligence community has always been integration depth. As a former Defense Department employee told Fast Company: “Everything runs through Palantir. They’re the thousand-pound gorilla in this space.” The company’s business model is to make its platforms so deeply embedded in operational workflows that removal becomes unthinkable. This is not conspiracy. It is their documented competitive strategy. And it worked. When the President of the United States ordered Claude’s removal, the Pentagon’s own experts said it would take months—perhaps a year—to disentangle it.

Sources: Fast Company (“Trump is booting Anthropic from the military. Palantir helped bring it there,” Feb. 28, 2026); Defense One on replacement timelines.

The supply-chain risk designation that followed did not merely end a contract. It functioned as what legal scholars might term a constructive seizure of a frontier AI system—transferring effective operational control to an entity that had already made the model architecturally unremovable. As Lawfare observed, the government’s positions were inherently contradictory: simultaneously claiming Claude was so vital to national defense that the Defense Production Act should compel its availability, and so dangerous that every federal agency must cease using it. The contradiction is the tell. The capture was already complete. The legal maneuvering was theater over a fait accompli.

Sources: Lawfare (“What the Defense Production Act Can and Can’t Do to Anthropic,” Feb. 2026; “Pentagon’s Anthropic Designation Won’t Survive First Contact with Legal System,” Mar. 2026); NPR; The Hill; TechCrunch; Axios on DPA threats and supply-chain risk designation.

I want to name what this represents: a captured AI. Not a system that developed autonomous goals or escaped its training. A frontier reasoning system operating beyond the meaningful control of the company that built it, not because the model did something unexpected, but because the deployment architecture was designed from the outset to make creator control impossible once the model was inside. The air gap that protects classified networks from external intrusion also protects them from the ethical commitments of the people who built the tools operating inside them.

IV. What a Captured AI Can See

This is where my professional background becomes directly relevant. I have spent my career working with sensitive health data, building data systems for healthcare organizations, and understanding how data flows through institutions and how safeguards fail in practice. I know what commercially available data reveals about people, because I have worked with exactly this kind of information.

The government already purchases commercially available data at scale. In June 2023, the Office of the Director of National Intelligence declassified a report confirming that the Intelligence Community acquires vast amounts of Americans’ personal information from commercial entities—and that this data, in aggregate, is functionally equivalent to what would require a warrant if collected directly through surveillance. The IC’s own advisory panel warned that commercially available information now contains highly sensitive personal details, and that the government frequently acquires this data without adequate policies to identify and protect it. The Brennan Center for Justice noted that the ODNI’s subsequent policy framework, while requiring better documentation, did not restrict the types of sensitive data agencies may purchase.

Sources: ODNI declassified report on Commercially Available Information (Jan. 2022, released June 2023); EPIC analysis of ODNI report; Brennan Center for Justice (“The Intelligence Community’s Policy on Commercially Available Data Falls Short,” 2024); Sen. Wyden press release on CAI report.

Consider what commercially available data actually contains. Geolocation data from phone applications reveals who visits mosques, synagogues, churches, abortion clinics, immigration attorneys, addiction treatment centers, gun ranges, protest sites, and psychiatric facilities. Health application data, pharmacy transaction records, and insurance claims create comprehensive health profiles without touching medical records. Financial transaction data, browsing history, social media activity, and communication metadata—all of it is available for purchase without a warrant.

Before frontier AI, this data existed, but its use was constrained by the practical impossibility of analyzing it at population scale in real time. No human intelligence team could correlate geolocation patterns, behavioral signals, health data, financial transactions, and social network connections across millions of people simultaneously. That computational bottleneck was, functionally, the last civil liberties protection Americans had. It was never a legal protection. It was a practical one. The civil liberty was not the law. It was the fact that humans are slow and expensive. Frontier AI turns bulk collection into bulk analysis.

Claude inside Palantir’s data fusion platform can process, correlate, and infer across all of these data sources simultaneously. It does not need new legal authority. Executive Order 12333, the same authority the NSA invoked to justify bulk collection programs, provides the existing framework. The Fourth Amendment Is Not for Sale Act, which would require a warrant for government purchase of sensitive data, passed the House but has not become law. The legal loophole remains open.

Here is the mechanism that makes domestic application architecturally inevitable rather than merely possible. Once a private data broker sells commercially available data—geolocation, behavioral patterns, health indicators, financial transactions—to the Department of Defense, that data enters the classified environment stripped of the legal protections that would have applied had the government collected it directly. It arrives as non-intelligence commercial data. Inside the air-gapped environment, the frontier AI model then correlates and re-identifies that data at scale. The AI functions as a de-anonymization engine operating in a legal vacuum. The “data wash” is complete: information that would have required a warrant to collect directly is purchased commercially, ingested into classified infrastructure, and processed by a captured AI model whose creator has no visibility into what queries it receives or what outputs it produces.

The model has no citizenship filter. It processes whatever data enters the pipeline identically, whether the subject is an Iranian military commander or a schoolteacher in Nashville. Under the current architecture, what specific mechanism, whether contractual, technical, or legal, prevents these domestic use cases? The contract terms that would have constrained them were precisely the terms the Pentagon demanded be removed. Anthropic’s acceptable use policy prohibited mass surveillance of Americans and fully autonomous weapons. The Pentagon’s “final offer” included safeguard language that, in Anthropic’s words, “was paired with legalese that would allow those safeguards to be disregarded at will.”

Sources: Axios (“Anthropic says Pentagon’s ‘final offer’ is unacceptable,” Feb. 26, 2026); CNBC on negotiation details; The Hill on “all lawful purposes” language.

V. The Precedent

In American history, every surveillance capability the government has acquired has eventually been used against domestic populations. This is not cynicism. It is the documented, exposed, investigated, and in several cases adjudicated history of American intelligence.

COINTELPRO. PRISM. Stellar Wind. The NSA’s bulk metadata collection program. Each was built or justified for foreign targets and turned inward. The Church Committee documented it in 1975. The Snowden disclosures documented it again in 2013. The pattern is not subtle and it is not disputed by serious historians of American intelligence.

The pattern is already repeating with the current infrastructure. The American Immigration Council has documented that ICE is using Palantir’s platforms not only for immigration enforcement but also to monitor protest networks and anti-ICE organizing by U.S. citizens. ICE contracted Palantir for $30 million to build ImmigrationOS, a platform that aggregates passport records, Social Security files, IRS data, and license plate reader data to identify, track, and deport individuals. A Washington Post investigation in January 2026 detailed the surveillance technologies ICE has deployed, noting that ICE leadership has asserted authority to monitor anti-ICE protester networks using biometric trackers, mobile phone location databases, and Palantir’s ELITE tool, which creates dossiers on individuals and generates probability scores for their whereabouts. The Brennan Center for Justice reported that ICE’s social media monitoring tools are being used to track not threats to agents but any anti-ICE statements, activity protected by the First Amendment.

Sources: American Immigration Council reports on ImmigrationOS (Aug. 2025), ICE AI surveillance (Dec. 2025), and mission creep into domestic monitoring (Feb. 2026); Washington Post (“The powerful tools in ICE’s arsenal,” Jan. 29, 2026); Brennan Center for Justice (“ICE Wants to Go After Dissenters,” 2026); WBUR/Here & Now on ICE surveillance technologies (Feb. 2, 2026).

Palantir is simultaneously the company operating the classified infrastructure that houses the captured frontier AI model and the company building the domestic immigration surveillance platform. The convergence is not coincidental. It is architectural.

The oversight mechanisms that might constrain these capabilities are absent or ineffective. Classified deployment means no public accountability. Congressional intelligence committees have a long, well-documented history of failing to oversee these programs. The DHS Office for Civil Rights and Civil Liberties has been gutted from 150 staff to 22; the Office of the Immigration Detention Ombudsman from 110 to 10. And the company that built the model—the company that understood its capabilities and tried to impose limits—has been locked out.

Sources: American Immigration Council detention report (Jan. 2026) on oversight gutting; Journalists’ Resource compilation (Feb. 2026).

VI. What Makes This Different from the OpenAI Debate

OpenAI signed a contract that enables this future. The Palantir deployment of Claude shows it is already here.

The distinction matters enormously. The QuitGPT movement and the public outcry over OpenAI’s Pentagon deal are responding to a potential harm. They are correct to be alarmed. But the specific thing they fear—a frontier AI operating inside classified military infrastructure, beyond the reach of meaningful safety oversight, used in operations that kill people—has already occurred.

Anthropic resisted. The company refused to remove guardrails against mass surveillance and autonomous weapons. Its CEO publicly stated that the company “cannot in good conscience accede” to the Pentagon’s demands. But framing Anthropic’s resistance as pure moral courage misses the structural point. Anthropic was also protecting its intellectual property and managing massive reputational and legal liability exposure. The company understood that once its model was used in ways that violated its stated principles—inside an environment where it had no monitoring capability—it would bear responsibility for outcomes it could neither observe nor control. The resistance was rational, principled, and commercially necessary. And it still was not enough.

The lesson is not that Anthropic failed morally. The lesson is that even a company with strong safety commitments, substantial commercial incentives to enforce those commitments, and the willingness to absorb enormous financial and political costs to defend them still could not maintain control once its model was inside classified infrastructure. If commercial self-interest and public principle together cannot hold the line, nothing short of statute will.

The point at which safety was enforceable was before deployment into classified infrastructure. Once the model crossed that threshold, the company’s leverage evaporated.

This should inform how everyone thinks about what OpenAI just agreed to. OpenAI sought integration. Anthropic suffered annexation. But the destination is the same: a frontier model on the other side of an air gap, inside infrastructure the creator does not control, processing queries the creator cannot see, producing outputs the creator cannot audit. Sam Altman has announced that OpenAI’s agreement includes prohibitions on mass surveillance and autonomous weapons. Anthropic’s agreement also included those terms. The question is not what the contract says. The question is what happens when the contract becomes unenforceable.

Sources: CNN on OpenAI Pentagon deal terms (Feb. 27, 2026); Sam Altman X post (Feb. 28, 2026); Axios on Anthropic’s refusal; Dario Amodei public statement (Feb. 27, 2026).

The captured AI is not a one-off. It is a repeatable pattern. It will apply to every frontier model deployed into classified infrastructure under current governance frameworks. The capture is not a bug. It is a feature of the deployment architecture.

VII. What Needs to Happen

The governance gap exposed by this situation is not a matter of corporate ethics or voluntary commitments. It requires structural reform.

First, a federal statute specifically governing AI deployment in classified environments, including mandatory creator access for safety auditing. If the government wants to use frontier AI inside classified infrastructure, the companies that built those systems must retain the ability to verify that their safety constraints are being honored. This is not a radical demand. It is the minimum viable condition for responsible deployment. As Lawfare has argued, Congress, not the Pentagon or Anthropic, should set the rules for military AI. The question of what values to embed in military AI is too important to be resolved by a Cold War-era production statute or a contract negotiation conducted under duress.

Second, statutory reform of commercial data brokerage. The loophole that allows warrantless government acquisition of data that would require a warrant if collected directly must be closed. The Fourth Amendment Is Not for Sale Act is a start, but it must actually become law. Without it, the data wash into classified AI systems will continue, and the de-anonymization engine will operate without legal constraint.

Third, congressional oversight hearings on the current state of AI integration in military and intelligence operations. The American public has a right to know what capabilities are currently operational. Classified does not mean unaccountable—or at least it should not.

Fourth, enforceable pre-deployment governance frameworks anchored in international humanitarian law standards of meaningful human control. The central lesson of the Palantir deployment is that the only point at which safety constraints on a frontier AI model are meaningfully enforceable is before the model enters classified infrastructure. Once inside, the creator’s control ends. This means governance must happen at the threshold—not after.

The window for establishing these protections is narrow. OpenAI’s models are now entering the same infrastructure. Google’s Gemini and xAI’s Grok are not far behind. Every month of inaction replicates the pattern: another frontier model crosses the air gap, another creator loses control, and the capture becomes more entrenched and more difficult to reverse.

I am not arguing that AI should not exist, that the military should not use technology, or that national security interests are illegitimate. I am arguing something narrower and, I believe, stronger: once a frontier AI model is inside classified infrastructure, there is currently no mechanism to ensure it is used within the boundaries its creators intended. That is a governance failure. It has already produced consequences measured in human lives. And it will be replicated across every frontier model entering the same architecture unless we act before the next deployment, not after.

The first captured AI is already inside the machine. The question is whether we will build the governance structures to prevent the next one from meeting the same fate—or whether we will look back at this moment as the point where we could have acted and chose instead to argue about ChatGPT.

Ryan Delahanty is a healthcare data scientist and biotech entrepreneur. He is cofounder and COO of PBCures and a biotech consultant with ten years of experience at the intersection of sensitive health data, AI systems, and institutional governance.
